VSCO Film X & The Imaging Lab

All images by Zach Hodges unless otherwise noted.

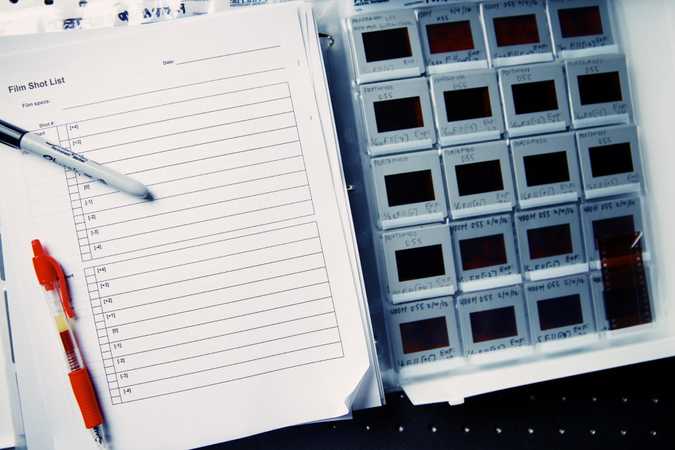

Film measurement log sheet and slides

Introduction

When you open up the VSCO app and load a photo into the editor, one of the first things you see is filters. The first group has a white background and colored letters, and they are different from all the rest. These are the “Film X” filters. While they look much like the others, these presets are the result of a 4-year labor of love.

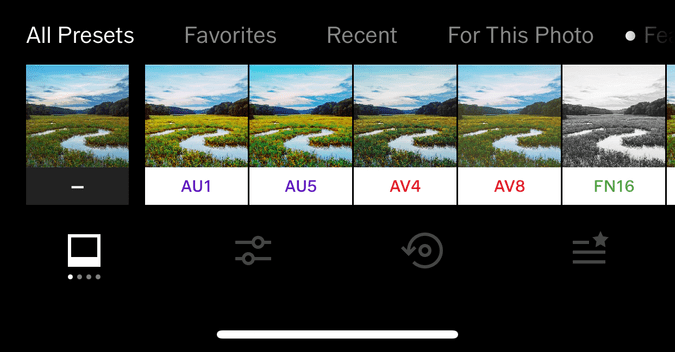

Film X filters in the VSCO app

How it started

It all started in 2011 with the original VSCO Film product we released for Adobe Lightroom that launched our company. By the end of 2012, the product’s creator, Zach Hodges, had developed an intense film testing method that delivered good results. However, it was highly labor-intensive, and the result was both limited by and restricted to the platform it was made for. What’s more, many films were getting hard to find, and even what was available was starting to degrade beyond usefulness. That’s when we realized the importance of the measurements we were taking, because we might not get to measure twice.

For us, that realization felt like an imperative. We wanted to capture as many films as we could before they were gone—and really capture them, so that what we made would be indistinguishable from real film, not just “close enough.” We wanted it to be infinitely reproducible on any software platform, with any camera, at any time. So, we amassed a huge pile of film, put it in a freezer, and got to work researching.

There must be a better way

Initially, we assumed that someone must have mapped out the look of different films before us. We searched high and low, talking to any expert we could find in search of answers, but every lead turned into a dead end. After some time, we realized we couldn’t bring this vision to life on our own. We understood the general concept of what we wanted to achieve, but it was clear that we were way out of our depth with the science. Fortunately, because of all our searching, we also knew where the answers were: with the color scientists. It turns out that vision is extremely complicated, and therefore reproducing what we see is also extremely complicated. Thus, there’s a whole field of science devoted to it. (You’re experiencing the benefits of it right now reading this screen.)

Building a spectral model

In 2014, we started looking for a color scientist and connected with Rohit Patil. With a graduate degree in Color Science and a background in color modeling for other media, he was the needle in a haystack we desperately needed. He brought the knowledge we had been missing, and a vision for the project soon began to take shape.

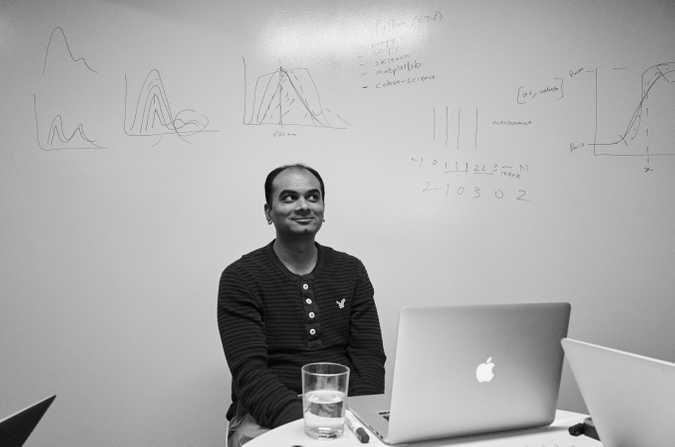

Rohit Patil during the development of Film X

We needed to build a physical model of how film responds to wavelengths of light. A “spectral” model, unshackled from any camera, chart, media type, or platform. Such a model would allow us to pass any “light spectrum” through it and get a “film output”. With this kind of a model, we’d be able to do everything we wanted and more. Additionally, any future engineer could use the data to bring the look of film to any future camera or platform. To make this spectral model, we also needed a process that could gather the needed data from a single roll of film, since that was sometimes all we had.
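To make the idea of passing a light spectrum through a model more concrete, here is a minimal sketch in Python. It is not the Film X model: the wavelength grid, the bell-shaped sensitivity curves, and the names `gaussian` and `layer_exposures` are all assumptions invented for illustration. It covers only the first step, integrating an incoming spectrum against each layer's sensitivity to estimate how strongly that layer is exposed.

```python
import math

# Hypothetical wavelength grid: 400-700 nm in 10 nm steps.
WAVELENGTHS = list(range(400, 701, 10))

def gaussian(center, width):
    """Toy bell-shaped sensitivity curve sampled on WAVELENGTHS (not measured data)."""
    return [math.exp(-0.5 * ((w - center) / width) ** 2) for w in WAVELENGTHS]

# Made-up sensitivities for the blue-, green- and red-sensitive layers.
SENSITIVITIES = {
    "blue": gaussian(450, 25),
    "green": gaussian(550, 25),
    "red": gaussian(640, 25),
}

def layer_exposures(spectrum, step_nm=10):
    """Integrate spectrum x sensitivity over wavelength for each layer (Riemann sum)."""
    return {
        name: sum(s * p for s, p in zip(sens, spectrum)) * step_nm
        for name, sens in SENSITIVITIES.items()
    }

# A flat ("equal energy") spectrum and a reddish one that ramps up with wavelength.
flat = [1.0] * len(WAVELENGTHS)
reddish = [(w - 400) / 300 for w in WAVELENGTHS]

flat_exposure = layer_exposures(flat)
red_exposure = layer_exposures(reddish)
```

Because the input is a spectrum rather than an RGB triplet, nothing in this sketch depends on a particular camera or chart, which is the property the paragraph above describes. A real model would replace the toy curves with measured sensitivities and continue on to dye formation and scanning.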

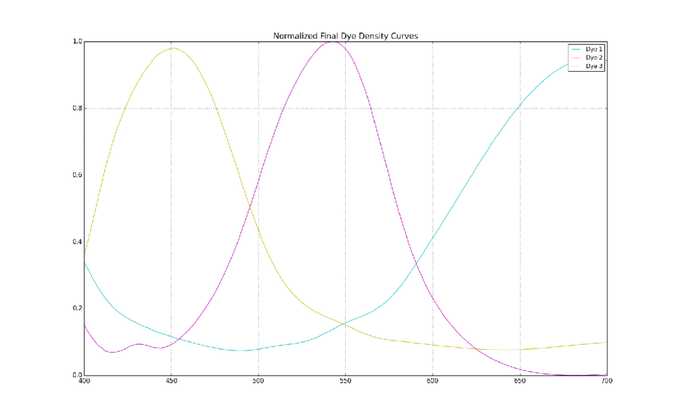

Example of measured spectral sensitivities of color film dyes

Film is made up of multiple layers that cannot simply be peeled apart, so our first challenge was working out how to measure the separate components of a film. We would have to measure them all at once to document how they all reacted to light. We were going to have to determine the spectral sensitivities of each layer, as well as the spectral properties of the dyes that form the final image once the film is processed. During this time of broad research, it felt like we were groping in the dark, hoping this would all somehow work.

Outfitting the imaging lab

As we gathered all of the custom (and expensive) equipment we needed piece by piece, we quickly realized that we needed a place to put it all. (This had all been happening in a dusty storage closet initially.) Luckily, around this same time, VSCO had grown out of its old space, and we were building out a new office in Oakland, California. So, we asked for an imaging lab, and were able to incorporate it into the plan.

A year later, we finally moved into our beautiful new lab, and it was a massive improvement. The lab is actually 3 rooms: a large, central working area, a film processing (or “wet”) lab, and a black room.

We needed our own wet lab to get fast and reliable results. Lia Cecaci, from our support team, stepped up originally and got everything up and running, including the arduous fire code compliance. She eventually handed the processing off to Lucciana Caselli, who skillfully handles it now.

VSCO’s wet film lab. Image by Lucciana Caselli.

We also built the black room—a special, light-sealed room with non-reflective black walls, ceilings and floors to ensure that our spectral data has no stray light reflections to contaminate the results. This “black box” is where much of the work of exposing and measuring the film happens.

The “Black Box” near the end of construction.

In between the wet lab and black room, there’s a large working area for desks, whiteboards, a couch, scanners, and that same freezer we bought back in 2012, full of about 100 different kinds of film. The room contains several scanners, but the most important are the two old Fuji Frontier units: one plus a backup in case the first one breaks. These have been popular in commercial film labs for digitizing film since the early 2000s, and labs still use them to this day because the look they impart to their scans is beloved within the film community.

VSCO Imaging Lab working area. Image by Lucciana Caselli.

Once our new lab was up and running, we ran into another problem: doing the measurements manually was extremely tedious, and the sheer amount of work ahead of us made it impractical. We needed an automated measuring system for film, but none existed. Thankfully, we had recently recruited some interns from the Multimedia and Creative Technologies department at USC. One was Yuan Liu, a film fanatic himself. With a background in digital signal processing, image processing, and hardware-related programming, Yuan brought all the knowledge we needed to take the next step. He built an Arduino-based robotic measurement system that automated the process of measuring the film with the spectroradiometer to collect the spectral data. The time required to measure each film went from days to hours. He then created our model of the Fuji Frontier scanner, a crucial last piece of the puzzle. Yuan quickly made himself indispensable to the project, and ultimately became the fourth member of our Film X Project Team.

Fuji Frontier Film Scanner. Image by Lucciana Caselli.

At last, the four of us had the first end-to-end model of a film (Kodak Portra 400) that was somewhat decent. Of course, another big problem then became clearer: applying the model to digital images. Since our model was based on physical light, we needed to feed it linear raw image data (also known as scene-referred data) to get accurate results on digital images. Thus, we also set out to find a reliable way to control raw rendering, which led us to Apple’s raw framework in OS X. We were able to do what we needed with this, and it became the basis for our testing. At the time, there was nothing like this on any mobile device, so we knew we would need a better solution eventually. But, for the purposes of testing and refining the film model, we pressed on with Apple raw.

An interactive film model

When we finally got a film model output that we felt was presentable, another big problem presented itself: modeling just one exposure of the film doesn’t come close to capturing the possible looks a photographer can achieve with it. In fact, through under- and overexposure, a single film can deliver a wide range of looks. We realized we needed to model them all. We needed an interactive film model, so we set about adding changes of exposure to our film model, shooting several stops over and under. We call it “character,” since the character of a film changes so much with exposure.
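As a rough illustration of why exposure changes a film's look, the sketch below shifts a linear, scene-referred value by whole stops and pushes it through an S-shaped characteristic curve. This is a toy under stated assumptions, not the Film X "character" control: the logistic curve shape, the function names `shift_exposure` and `toy_density`, and every parameter value are invented for the example.

```python
import math

def shift_exposure(linear_value, stops):
    """Scale a linear, scene-referred value by a number of photographic stops."""
    return linear_value * (2.0 ** stops)

def toy_density(exposure, d_min=0.1, d_max=2.5, gamma=0.6, pivot=0.18):
    """Hypothetical S-shaped characteristic curve: log exposure -> density.

    All parameters are invented for illustration, not measured from any film.
    """
    log_e = math.log10(max(exposure, 1e-6) / pivot)
    # Logistic curve in log-exposure space, bounded by d_min and d_max.
    # The slope is chosen so the steepest part of the curve equals gamma.
    slope = 4.0 * gamma / (d_max - d_min)
    return d_min + (d_max - d_min) / (1.0 + math.exp(-slope * log_e))

mid_gray = 0.18  # conventional scene mid-gray reflectance
for stops in (-2, -1, 0, 1, 2):
    exposed = shift_exposure(mid_gray, stops)
    print(f"{stops:+d} stops -> density {toy_density(exposed):.3f}")
```

Because the curve is not a straight line, each stop of over- or underexposure lands on a different part of it, so tones compress differently in shadows and highlights. With a separate curve per color layer, the same shift would also move the colors, which is one plausible reading of why a single exposure cannot capture a film's full range of looks.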

Once that was complete, we realized that the balance between the three color channels (red, green, and blue, or RGB) changed dramatically with exposure. For this reason (and many others), commercial film scanners include controls to balance the three channels for each picture. Since every scene responds a bit differently to changes in the channel balance, a “one-size-fits-all” setting did not work well. Our film model therefore needed another level of interaction: “warmth,” which models adjustments to the channel balance in the scanner model.
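The article does not say how "warmth" is implemented, so the following is only a guess at the general shape of a channel-balance control, written as a minimal Python sketch. The function name `apply_warmth`, the choice to express the control in stops, and the decision to leave green untouched are all assumptions made for illustration.

```python
def apply_warmth(rgb, warmth):
    """Rebalance linear RGB: positive warmth boosts red and cuts blue.

    `warmth` is a hypothetical control measured in stops of red/blue
    separation; green is left untouched as the reference channel.
    """
    r, g, b = rgb
    gain = 2.0 ** (warmth / 2.0)
    return (r * gain, g, b / gain)

neutral = (0.18, 0.18, 0.18)  # a mid-gray patch in linear RGB
warmer = apply_warmth(neutral, 0.5)
cooler = apply_warmth(neutral, -0.5)
```

Splitting the adjustment evenly between red and blue keeps the green channel fixed, so the overall brightness moves less than it would if only one channel were scaled. A per-picture control like this mirrors the per-picture balancing that commercial scanners expose.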

As we drew closer to what we felt was a releasable product, another lucky break rewarded our persistence: Apple was bringing its raw framework to iOS 10! This meant we could bring the full experience of the film model on the raw images to mobile devices in the VSCO app. But this presented one final challenge: the entire infrastructure of the editing portion of the app would have to be rebuilt to support this new functionality. So, we set to work, but we needed the help of our software engineer Jason Gallagher, who believed in the project so much that he literally worked through the weekend to get the whole project over the finish line in time for launch. On December 7th, 2016, Film X finally launched to the public with 5 films.

What the process looks like today

When Rohit joined the team, we asked for a year to complete the project. The Film X piece ended up taking two years for the negative film, and another six months for positive slide film.

This Wired article explains it all in more detail, but the basics are as follows. We load each film into our Canon EOS 3 SLR camera with a Zeiss 50mm lens. In the black room, we photograph test charts and scenes that have been specifically selected to include the full range of the visible light spectrum, a range of color combinations, and a variety of skin tones.

Next, we develop the film in the wet lab and then run it through the measurement system, which can take as long as 30 to 40 minutes for each frame of film. We also scan these frames using the Frontier (or other) scanner. All this data then goes to our custom software, which uses a combination of color science, statistics, and machine learning to figure out the spectral properties of the film, including dye densities and film spectral sensitivities.

The result, at long last, is a rigorous spectral model, a digital representation of how film responds to light itself. Point your digital camera at any scene, and the film model can figure out how film would have responded to that same scene. We then turn that result into a filter for the VSCO app.

At the time of this writing, over 7 years from when we began this project, we have now released 38 Film X filters through this process into this new architecture. We can shoot and import RAW and JPEG files and apply the most accurate, interactive film model ever created to them… on our phones. If anyone out there is half as happy about that as we are, then all this work was worth it.

The main thing we’re left with is a sense of accomplishment and pride. To our knowledge, no one besides film manufacturers has ever even done this kind of work. We modeled film based on how it reacts to light itself. It was extremely difficult to do, and we’re extremely proud of it. Louis Pasteur said “Fortune favors the prepared mind,” and that feels especially true with this project.

So next time you open the VSCO app and see those white filters staring back at you, give them a try, and enjoy the fruits of our labor: Film X.

Film freezer full of preserved film.

Tools in the film development lab.

Measuring spectral properties of film.

If you find this kind of work interesting, come join us!