Using On-Device Machine Learning to Suggest Presets for Images in VSCO

At VSCO, we’re using machine learning (ML) in many different ways. One of the most exciting is our latest feature built on on-device ML: “For This Photo”. It addresses a complex problem for our users, and it brings together several areas of technology we’re excited about.

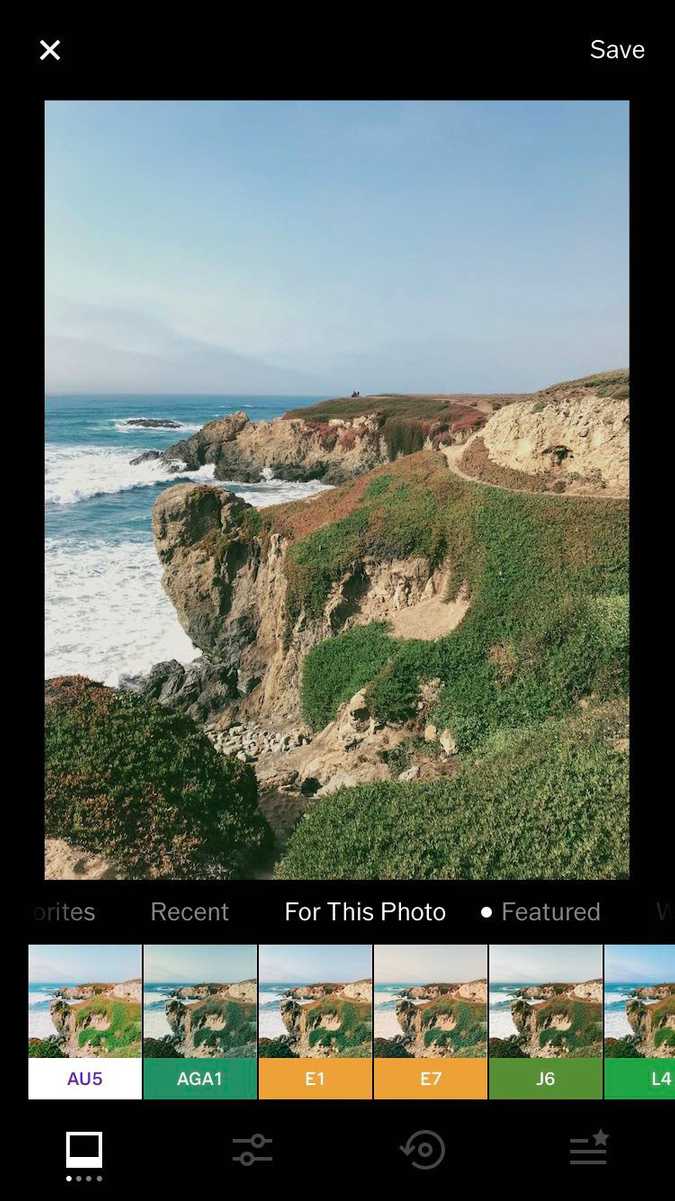

“For This Photo” feature

The Challenge

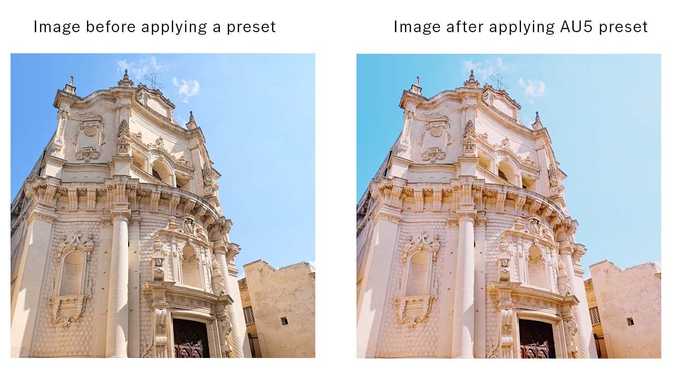

VSCO has a catalog of over 200 presets, which are pre-configured editing adjustments that help our users transform their images. These include emulations of classic film stocks such as Kodak Kodachrome 25, Fujifilm Velvia 50, Agfa Ultra 50, and more.

With so many presets to choose from, our research suggested that members felt overwhelmed and stuck to the few favorites they already knew and liked instead of trying new ones.

The Solution

Our challenge was to overcome decision fatigue by providing trusted guidance and encouraging discovery, while still leaving room for users to be creative in how they edit their images. Our answer was to suggest presets for each image. The “For This Photo” feature uses on-device ML to identify what kind of photo a user is editing and then suggests relevant presets from a curated list. Our users have embraced it: “For This Photo” is now the second most used preset category, behind only the view that shows all available presets.

“For This Photo” in action. Gif by Augustine Ortiz

We decided to use deep convolutional neural network (CNN) models because they capture many of the nuances in an image, which makes categorization easier and faster than with traditional computer vision algorithms. Our Imaging team thoughtfully curated presets that complement different types of images, allowing us to deliver a personalized recommendation for each photo.

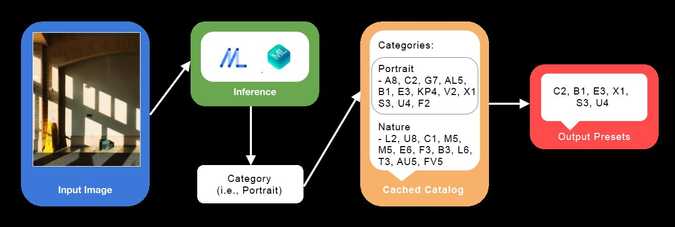

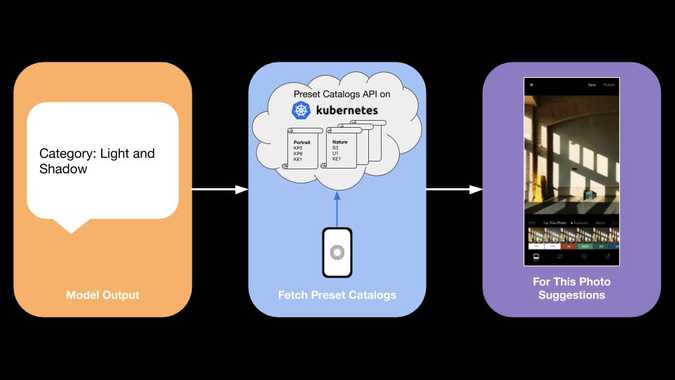

Here is what happens when a user starts editing an image with “For This Photo”:

- When the user loads the image in the Edit View of our photo editor, the model immediately starts running inference (prediction) on it.

- Inference returns a category for the image, such as “portrait” or “urban”.

- The ID of that category is matched against the corresponding ID in the cached catalog, which contains a predefined list of presets for each category.

- Six presets are chosen from the retrieved list; some are available to everyone and some only with a VSCO Membership. These presets appear in the “For This Photo” tray in our photo editor.
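The flow above can be sketched in a few lines of Python. This is a hypothetical illustration, not our production code: the `Preset` type, the `predict_category` and `suggest_presets` helpers, and the dictionary catalog keyed by category ID are assumptions, and the sketch assumes each curated list is already ordered so that taking the first six entries is enough.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Preset:
    name: str
    member_only: bool  # True if the preset requires a VSCO Membership


def predict_category(scores: list[float]) -> int:
    """Return the category ID, i.e. the index of the highest model score."""
    return max(range(len(scores)), key=lambda i: scores[i])


def suggest_presets(scores: list[float], catalog: dict[int, list[Preset]],
                    count: int = 6) -> list[Preset]:
    """Map the model's output scores to up to `count` presets from the cached catalog."""
    category_id = predict_category(scores)
    # Match the category ID against the cached catalog; unknown IDs yield no suggestions.
    curated = catalog.get(category_id, [])
    # Assumption: each curated list is pre-ordered (free and member presets mixed),
    # so the first `count` entries are the suggestions.
    return curated[:count]


# Hypothetical catalog: category 0 has eight curated presets, category 1 has one.
catalog = {
    0: [Preset(f"preset_{i}", member_only=(i % 2 == 1)) for i in range(8)],
    1: [Preset("preset_x", member_only=False)],
}
tray = suggest_presets([0.7, 0.2, 0.1], catalog)
print([p.name for p in tray])
# ['preset_0', 'preset_1', 'preset_2', 'preset_3', 'preset_4', 'preset_5']
```

Keeping the category-to-preset mapping as data rather than code is what makes it possible to update the lists without retraining the model.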

Showing both free and paid presets matters to us: it provides value to everyone who uses VSCO while also demonstrating the value of membership. To this end, non-members can still preview presets that are part of VSCO membership. For members, the feature also shows which types of images their member-only presets work well on.

On-device ML ensures accessibility, speed, and privacy

From the get-go, we knew server-based machine learning was not an option for this feature. There were three main reasons we wanted this feature to use on-device ML: offline editing, speed, and privacy.

First, we did not want to limit our members’ creativity by offering this feature only when they were online. Inspiration can strike anywhere – someone could be taking and editing photos with limited network connectivity, in the middle of a desert, or high up on a mountain.

Second, we wanted to ensure editing would be fast. If we offered “For This Photo” with the ML model in the cloud, we’d have to upload users’ images to categorize them (which takes time, bandwidth, and data), and users would have to download the presets, making the process slow on a good connection and impossible on a poor one. Doing the ML on-device means that everything happens locally, quickly, with no connection required. This is crucial for helping users capture the moment and stay in the creative flow.

Third, the editing process is private. A server-side solution would require us to upload users’ photos while they were still being edited, before users had decided to publish them. We wanted to respect our users’ privacy throughout their creative process.

Overview of VSCO’s Machine Learning Stack

The three main components of our ML tech stack are: TensorFlow, Apache Spark, and Elasticsearch. We use TensorFlow for deep learning on images, Apache Spark for shallow learning on behavioral data, and Elasticsearch for search and relevance.

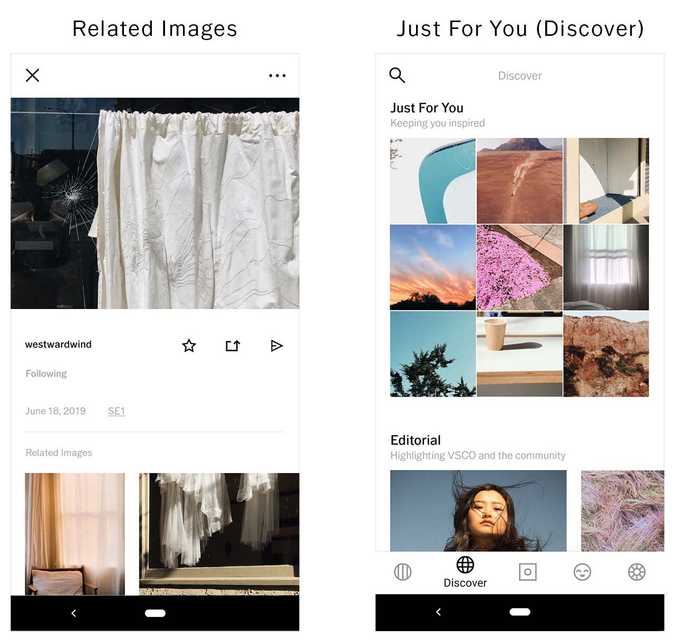

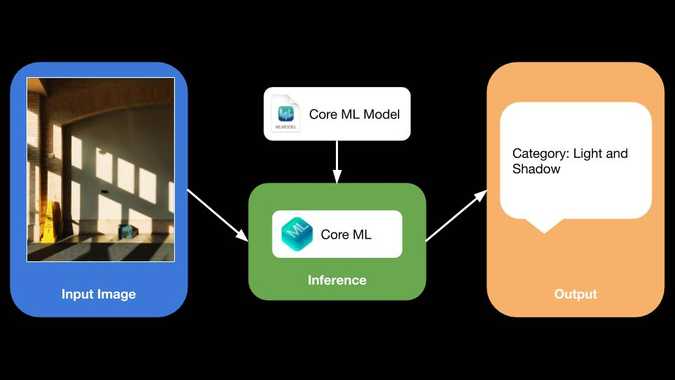

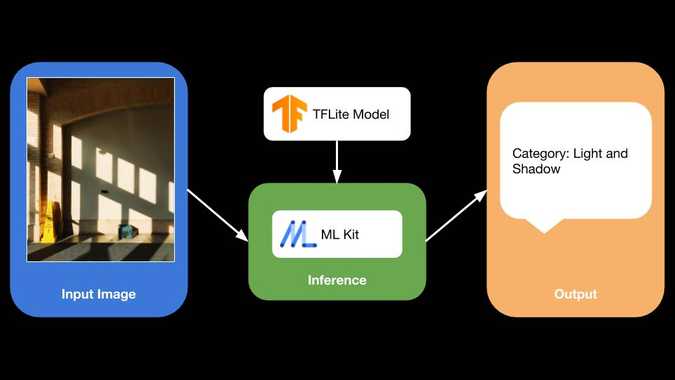

We have a real-time, cloud-based inference pipeline using TensorFlow Serving that runs every image uploaded to VSCO through various convolutional neural networks. The inference results of these models power product features such as Related Images, Search, Just For You, and other sections in Discover. For on-device inference, we use ML Kit with TensorFlow Lite on Android and Core ML on iOS. We’ll get into the details of this part of our stack in the next section.

We also have an Apache Spark-based recommendation engine that allows us to train models on large datasets from different sources in our data store: image metadata, behavioral events, and relational data. We then use the results of these models to serve personalized recommendations in various forms, like our User Suggestions feature.

To power our ML pipeline, we use Apache Kafka for log-based distributed streaming of the data that feeds all our ML models, and Kubernetes for deploying all our microservices. Our languages include Python, C++, Go, and Scala, plus Java/Kotlin and Swift/Objective-C for on-device integrations.

“For This Photo” - Technical Implementation

Step One: Categorizing Images

To build a model that serves the “For This Photo” feature, we split the problem in two: first assign a category to the image, then suggest presets designed to work well for that category.

We started with image data tagged by our in-house human curators. These curators are photography experts who have a front-row seat to our user behavior, so they know better than anyone what type of content is being shared and what trends are developing. They helped our engineering team define the image categories for our model, which include art, portrait, vibrant, coastal, nature, architecture, light and shadow, monochrome, and many more. As the saying goes, 90% of machine learning is cleaning up your data, and starting from expert-tagged images helped ensure that the data our model was trained on was reliable.

Using this categorized dataset, we trained a CNN model in TensorFlow based on the SqueezeNet architecture. We chose SqueezeNet because it is smaller than other architectures with little loss in accuracy.
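To give a sense of why SqueezeNet is small, the arithmetic below counts the weights (ignoring biases) in one of its Fire modules, where a 1x1 “squeeze” layer feeds parallel 1x1 and 3x3 “expand” layers, and compares it with a plain 3x3 convolution that has the same input and output channels. The layer sizes are those of the first Fire module (fire2) in the original SqueezeNet v1.0 design; they are illustrative and are not taken from our trained model.

```python
def conv_weights(in_channels: int, out_channels: int, kernel: int) -> int:
    """Number of weights in a kernel x kernel convolution (biases ignored)."""
    return in_channels * out_channels * kernel * kernel


def fire_module_weights(in_channels: int, squeeze: int,
                        expand1x1: int, expand3x3: int) -> int:
    """Weights in a Fire module: 1x1 squeeze layer, then parallel 1x1 and 3x3 expand layers."""
    return (conv_weights(in_channels, squeeze, 1)
            + conv_weights(squeeze, expand1x1, 1)
            + conv_weights(squeeze, expand3x3, 3))


# fire2 in SqueezeNet v1.0: 96 input channels, s1x1=16, e1x1=64, e3x3=64 (128 output channels)
fire = fire_module_weights(96, 16, 64, 64)
plain = conv_weights(96, 128, 3)  # a plain 3x3 conv producing the same 128 channels
print(fire, plain)  # 11776 110592
```

The Fire module needs roughly 9x fewer weights for the same output width, because the squeeze layer shrinks the number of channels that the expensive 3x3 filters see.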

ML Kit on Android

Since we wanted to do on-device machine learning with our custom model, TensorFlow Lite was an obvious choice: the TFLiteConverter makes it easy to take a model trained on the server and convert it into a format suitable for a phone (.tflite).

We had also already seen TensorFlow and TensorFlow Serving succeed in production for our server-side ML. TensorFlow’s libraries are designed with running ML in production as a primary focus, so we expected TensorFlow Lite to be no different.

We used ML Kit to run inference on a TensorFlow Lite model. ML Kit provides higher-level APIs that take care of initializing and loading a model and running inference on images, so we didn’t have to work with the lower-level TensorFlow Lite C++ libraries directly. This made development much faster and left us more time to hone the model, which let us take the feature from prototype to production fairly quickly.

Categorizing an image - Android


We converted this trained model from TensorFlow’s Saved Model format to TensorFlow Lite (.tflite) format using the TFLiteConverter for use on Android. Some of our initial bugs at this stage were caused by a mismatch between the version of TFLiteConverter we used and the version of the TensorFlow Lite library that ML Kit referenced via Maven. The ML Kit team was very helpful in fixing these issues as they came up.

```python
import tensorflow as tf

graph_def_file = "model_name.pb"
# this list can hold more than one input name if the model requires multiple inputs
input_arrays = ["input_tensor_name"]
# this list can hold more than one output name if the model has multiple outputs
output_arrays = ["output_tensor_name"]

# from_frozen_graph is a TF1-style API; under TensorFlow 2.x it lives in tf.compat.v1
converter = tf.compat.v1.lite.TFLiteConverter.from_frozen_graph(
    graph_def_file, input_arrays, output_arrays)
tflite_model = converter.convert()

with open("converted_model.tflite", "wb") as f:
    f.write(tflite_model)
```

Python code for converting a frozen TensorFlow graph (.pb) to a TFLite model using TFLiteConverter

Once we had a model that could categorize images, we bundled it into our app and ran inference on images with ML Kit with little effort. Because we were using our own custom-trained model, we used ML Kit’s Custom Model API. For better accuracy, we skipped the quantization step during model conversion and used a floating-point model. This took some extra work because ML Kit assumes a quantized model by default, so we changed some of the model initialization steps to support a floating-point model.
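For context on that trade-off, here is a simplified sketch of the affine uint8 scheme TensorFlow Lite uses for quantized tensors, where each float is stored as an 8-bit integer q and recovered as real = scale * (q - zero_point). The scale and zero point below are made-up values for a single tensor; the point is only that every value gets rounded to one of 256 levels, a loss of precision a floating-point model avoids.

```python
def quantize(x: float, scale: float, zero_point: int) -> int:
    """Store a float as uint8 so that x is approximately scale * (q - zero_point)."""
    q = round(x / scale) + zero_point
    return max(0, min(255, q))  # clamp to the uint8 range [0, 255]


def dequantize(q: int, scale: float, zero_point: int) -> float:
    """Recover the approximate float value from its uint8 code."""
    return scale * (q - zero_point)


# Hypothetical parameters for a tensor whose values fall roughly in [-1.0, 1.0].
scale = 2.0 / 255
zero_point = 128

x = 0.3337
x_hat = dequantize(quantize(x, scale, zero_point), scale, zero_point)
# Within the representable range, the round trip loses up to scale / 2 (about 0.004).
print(x, x_hat, abs(x - x_hat))
```

A quantized model is smaller and often faster, but every weight and activation carries this rounding error, which is why we opted for the floating-point model.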

```java
// create a model interpreter for the local model (bundled with the app)
FirebaseModelOptions modelOptions = new FirebaseModelOptions.Builder()
        .setLocalModelName("model_name")
        .build();
FirebaseModelInterpreter modelInterpreter = FirebaseModelInterpreter.getInstance(modelOptions);

// specify input/output details for the model
// SqueezeNet architecture uses a 227 x 227 image as input
FirebaseModelInputOutputOptions modelInputOutputOptions = new FirebaseModelInputOutputOptions.Builder()
        .setInputFormat(0, FirebaseModelDataType.FLOAT32, new int[]{1, 227, 227, 3})
        .setOutputFormat(0, FirebaseModelDataType.FLOAT32, new int[]{1, numLabels})
        .build();

// create input data; imgDataArray is a float[1][227][227][3] array
FirebaseModelInputs input = new FirebaseModelInputs.Builder().add(imgDataArray).build();

// run inference; run() is asynchronous and returns a Task
modelInterpreter.run(input, modelInputOutputOptions)
        .addOnSuccessListener(result -> {
            float[][] scores = result.getOutput(0); // shape (1, numLabels)
            // pick the image category from the scores
        });
```

Java code for running inference on our custom floating-point model using ML Kit’s Custom Model API

Core ML on iOS

For iOS, Core ML was the obvious choice for on-device ML. It has been around for a couple of years and is a mature, easy-to-use library. Core ML provides high-level APIs covering everything from converting a model to Core ML format to running inference on images on-device. During inference, Core ML can use hardware acceleration, including Apple’s Neural Engine, to get the best possible performance from the device.

Apple’s Core ML Tools made it very easy to convert our server-trained model into Core ML (.mlmodel) format. Because the converter supported Keras and Caffe models but not TensorFlow’s Saved Model format at the time, we used a Caffe model we had trained with the SqueezeNet architecture. The best part was that the converter even let us specify the channel-wise bias and the channel order that the model needed for its input image. This made these pre-processing steps part of the converted model, so we didn’t have to pre-process images in our app before feeding them into the model during inference.
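Conceptually, the `is_bgr` and bias arguments in the conversion script ask Core ML to do the following to each pixel before it reaches the network: scale it (1.0 here), add a per-channel bias, and hand the channels over in BGR order, as Caffe models are commonly trained with. The plain-Python function below only illustrates that arithmetic for a single pixel using the script’s bias values; the function itself is hypothetical, and Core ML applies the equivalent step to the whole image inside the model.

```python
def preprocess_pixel(rgb, red_bias=-123.0, green_bias=-117.0, blue_bias=-104.0,
                     image_scale=1.0, is_bgr=True):
    """Illustrate per-pixel preprocessing: scale, add per-channel bias, reorder channels."""
    r, g, b = rgb
    # Each named color channel gets its own bias; these values are close to the
    # commonly used ImageNet channel means, negated (mean subtraction).
    r = image_scale * r + red_bias
    g = image_scale * g + green_bias
    b = image_scale * b + blue_bias
    # Hand the channels to the network in the order the Caffe model expects.
    return (b, g, r) if is_bgr else (r, g, b)


print(preprocess_pixel((200, 100, 50)))  # (-54.0, -17.0, 77.0)
```

Baking this into the model means the app can pass camera images straight to Core ML without its own mean-subtraction or channel-swapping code.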

```python
# Conversion script for Caffe -> Core ML. Includes switching of RGB color channels to BGR.
import coremltools

coreml_model = coremltools.converters.caffe.convert(
    ("model_name.caffemodel", "model_name.prototxt"),
    class_labels="labels.txt",
    image_input_names="input",
    is_bgr=True,
    red_bias=-123,
    green_bias=-117,
    blue_bias=-104)

# convert() returns an MLModel; save_spec expects its underlying spec
coremltools.utils.save_spec(coreml_model.get_spec(), "model_name.mlmodel")
```

Python code for converting a Caffe model to a Core ML (.mlmodel) model using Core ML Tools

Once we had a model that could assign categories to images, Core ML made it straightforward to bundle the model into our app and run inference on images with it. Adding the model to our Xcode project was as simple as dragging it into the project navigator, and Xcode automatically generates a Swift class that provides easy access to the model.

```swift
import Vision

// wrap the Xcode-generated model class in a Vision model
// (ModelName is a placeholder for the generated class)
guard let model = try? VNCoreMLModel(for: ModelName().model) else { return }

// set up a Vision request using the model
let request = VNCoreMLRequest(model: model, completionHandler: { [weak self] request, error in
    self?.processClassifications(for: request, error: error)
})

// crop the image to the dimensions needed by the model
request.imageCropAndScaleOption = .centerCrop

// run inference by performing the VNCoreMLRequest off the main thread
DispatchQueue.global(qos: .userInitiated).async {
    let handler = VNImageRequestHandler(ciImage: ciImage, orientation: orientation)
    do {
        try handler.perform([request])
    } catch {
        // handle the error
    }
}
```

Swift code for running inference on our Core ML model

Step Two: Suggesting Presets

The next challenge was to suggest presets based on these categories of images. We collaborated with our in-house Imaging team who had created these presets to come up with lists of presets that work well for images in each of these categories. This process included rigorous testing on many images in each category (landscape, water, etc.) where we analyzed how each preset affected various colors. In the end, we had a curated catalog with tastefully chosen presets mapped to each category.

Suggesting presets for an image


These curated catalogs are ever-evolving as we add new presets to our membership offering. To update these lists on the fly without requiring users to update their app, we store the catalogs on the server and serve them through an API: a microservice written in Go that the mobile clients check in with periodically to make sure they have the latest version. The clients cache the catalog and fetch it again only when a new version is available.

However, this approach creates a “cold start” problem for users who don’t connect to the internet before trying the feature for the first time, since the app never gets a chance to download the catalogs from the API. To solve this, we ship a default version of the catalogs with the app. This lets every user use the feature regardless of connectivity, which was one of its original goals.
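A minimal sketch of that client-side caching logic, written in Python for illustration rather than in our app’s languages. The JSON shape, the `CatalogCache` class, and the two fetch callbacks (standing in for calls to the Go catalog API) are all hypothetical; what the sketch shows is the order of operations: start from the bundled default, download only when the server reports a newer version, and keep the cached copy when offline.

```python
from __future__ import annotations

import json
from dataclasses import dataclass
from typing import Callable


@dataclass
class Catalog:
    version: int
    presets_by_category: dict[int, list[str]]


def parse_catalog(raw: str) -> Catalog:
    """Parse a hypothetical payload: {"version": 2, "categories": {"0": ["preset_a", ...]}}."""
    data = json.loads(raw)
    return Catalog(
        version=data["version"],
        presets_by_category={int(k): v for k, v in data["categories"].items()},
    )


class CatalogCache:
    def __init__(self, bundled_json: str):
        # Cold start: the default catalog shipped with the app works with no network at all.
        self.current = parse_catalog(bundled_json)

    def refresh(self, fetch_latest_version: Callable[[], int],
                fetch_catalog: Callable[[], str]) -> bool:
        """Periodic check-in with the catalog API; returns True if the cache was replaced."""
        try:
            if fetch_latest_version() <= self.current.version:
                return False  # already up to date, so skip the download
            self.current = parse_catalog(fetch_catalog())
            return True
        except OSError:
            return False  # offline or network error: keep the cached catalog

    def presets_for(self, category_id: int) -> list[str]:
        return self.current.presets_by_category.get(category_id, [])


bundled = json.dumps({"version": 1, "categories": {"0": ["preset_a", "preset_b"]}})
cache = CatalogCache(bundled)
print(cache.presets_for(0))  # ['preset_a', 'preset_b']
```

Checking a version number before downloading keeps the periodic check-in cheap, and the bundled default guarantees the lookup never comes back empty just because the device has never been online.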

Results and Conclusion

With “For This Photo”, we accomplished our goal to make editing with presets easier to navigate. We believe that if our members don’t find value in getting new presets, their creative progress is being hindered. We wanted to do better. We wanted to help more users not just discover new presets, but zero in on the presets that best matched what they were working on.

At VSCO, we’re looking to ML to provide inspiration and trusted guidance. We want to continue to improve “For This Photo” to provide recommendations based on other image characteristics as well as the user’s community actions (e.g. follows, favorites, and reposts). We also want to provide greater context for those recommendations, and to encourage our community of creators to discover and help fuel the creative spark in each other.

Acknowledgments

Many people from multiple teams contributed to the development of this feature - Yuanzhen Li, Melinda Lu, Katherine Pioro, Eric Cheong, Viet Pham, Maggie Carson Jurow, RJ Simonian, Augustine Ortiz, and many others.

Thanks to Jenny Zheng, Zach Hodges, Lucas Kacher, and Jessica Jiang for copy editing and reviews.

If you find this kind of work interesting, come join us!